What is the Humanization Score?

A 0-100 measurement of how natural and human-like a piece of text reads. Computed by TextSight on every AI Detector scan and every AI Humanizer rewrite. Higher means more human-like; lower means more AI fingerprints.

How is the score calculated?

A weighted blend of five signals: burstiness, perplexity, lexical diversity, structural patterns, and model-specific fingerprints. The weights are tuned against a benchmark of human-written and AI-generated content. Read the full methodology.
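
As a rough illustration of what a weighted blend means, here is a minimal Python sketch. The weights, the signal key names, and the assumption that each signal is already normalized to a 0-1 range (1 = most human-like) are all hypothetical. TextSight's actual weights and normalization are not given in this FAQ.

```python
# Hypothetical weights for illustration only; NOT TextSight's real values.
ASSUMED_WEIGHTS = {
    "burstiness": 0.25,
    "perplexity": 0.25,
    "lexical_diversity": 0.20,
    "structural_patterns": 0.15,
    "model_fingerprints": 0.15,
}


def blended_score(signals, weights=ASSUMED_WEIGHTS):
    """Combine per-signal values (assumed 0-1, 1 = most human-like) into a 0-100 score."""
    missing = set(weights) - set(signals)
    if missing:
        raise ValueError(f"missing signals: {sorted(missing)}")

    total_weight = sum(weights.values())
    weighted_sum = 0.0
    for name, weight in weights.items():
        # Clamp each signal into [0, 1] so one outlier cannot push the score out of range.
        value = min(max(signals[name], 0.0), 1.0)
        weighted_sum += weight * value

    # Divide by the total weight so the result stays on a 0-100 scale
    # even if the weights do not sum exactly to 1.
    return round(100 * weighted_sum / total_weight, 1)


example_signals = {
    "burstiness": 0.8,
    "perplexity": 0.6,
    "lexical_diversity": 0.7,
    "structural_patterns": 0.5,
    "model_fingerprints": 0.9,
}
print(blended_score(example_signals))
```

Because the result is a weighted average, a weak signal with a small weight moves the score less than a weak signal with a large weight.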

What's a good Humanization Score?

It depends on the stakes: 60+ for personal writing, 75+ for academic submissions, 85+ for compliance, legal, or journalism work. Below 40, most readers and detectors will flag the text as AI-generated.
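
If you track scores in your own workflow, the targets above can be encoded as a simple lookup. This is a sketch only: the use-case labels, the function name, and the returned messages are made up for illustration and are not part of any TextSight API.

```python
# Targets taken from the guidance above; the dictionary keys are hypothetical labels.
TARGETS = {
    "personal": 60,
    "academic": 75,
    "compliance": 85,  # also covers legal and journalism
}
LIKELY_FLAGGED_BELOW = 40


def assess(score, use_case):
    """Compare a 0-100 Humanization Score against the target for a use case."""
    if not 0 <= score <= 100:
        raise ValueError("score must be between 0 and 100")
    if use_case not in TARGETS:
        raise ValueError(f"unknown use case: {use_case!r}")

    if score < LIKELY_FLAGGED_BELOW:
        return "likely flagged as AI"
    if score >= TARGETS[use_case]:
        return "meets target"
    return "below target"


print(assess(78, "academic"))    # meets target
print(assess(78, "compliance"))  # below target
print(assess(35, "personal"))    # likely flagged as AI
```

The same score can be fine for one purpose and short of the mark for another, which is why the check takes the use case as an input.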

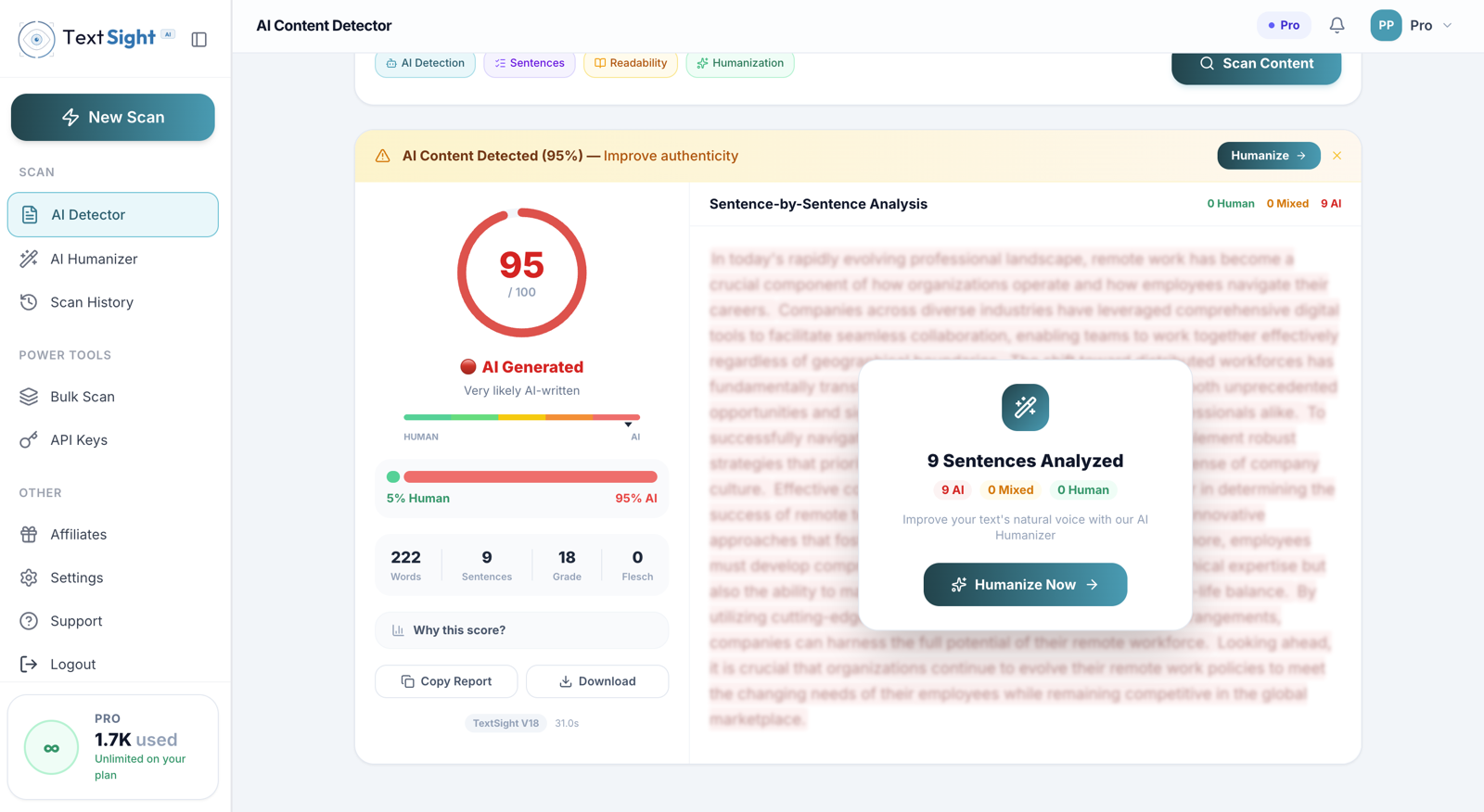

How is it different from the AI probability score?

AI probability answers "how likely is it that this text is AI-generated?" (higher = more AI). Humanization Score answers "how natural does this read?" (higher = more human). The two are usually inversely correlated, but not perfectly: a sentence can be obviously AI-written and still flow well, or human-written and still read as stilted.

Will a high score guarantee my text passes a specific detector?

No. The score is computed against TextSight's own detector. A high score correlates with passing most other detectors, but no number guarantees a pass on any specific third-party tool. If you need to pass a specific detector, verify by re-scanning on that tool.

Can the score be wrong?

Yes. Like every AI detection signal, it's probabilistic. Heavily edited AI text can score high; deliberately stilted human writing can score low. We flag low-confidence scores on short or unusual text. Use it as a benchmark, not a verdict.

Where does the score appear?

Every AI Detector scan, every AI Humanizer rewrite, and every output from the 20+ free writing tools at /tools/. Anywhere TextSight processes text, you get the score.